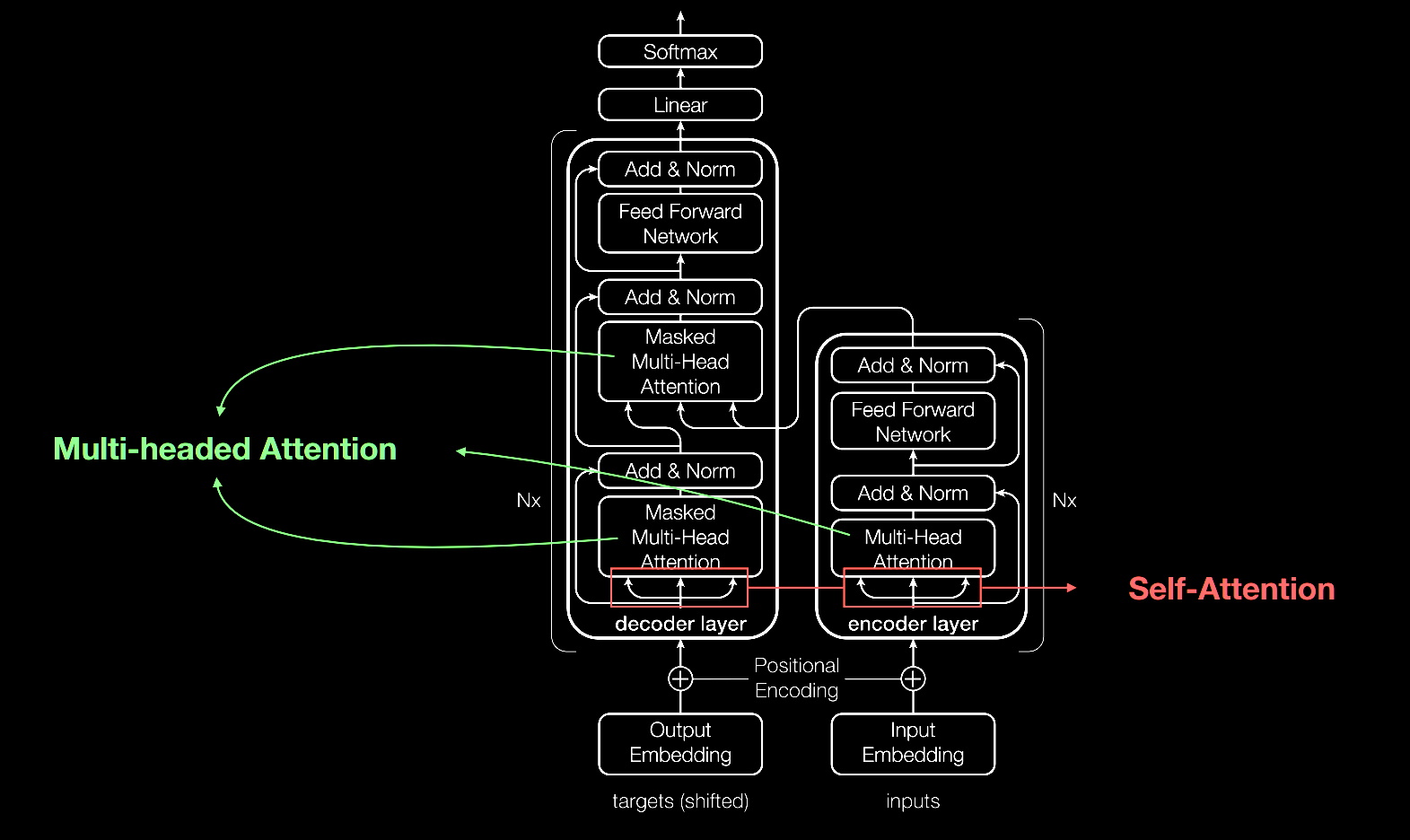

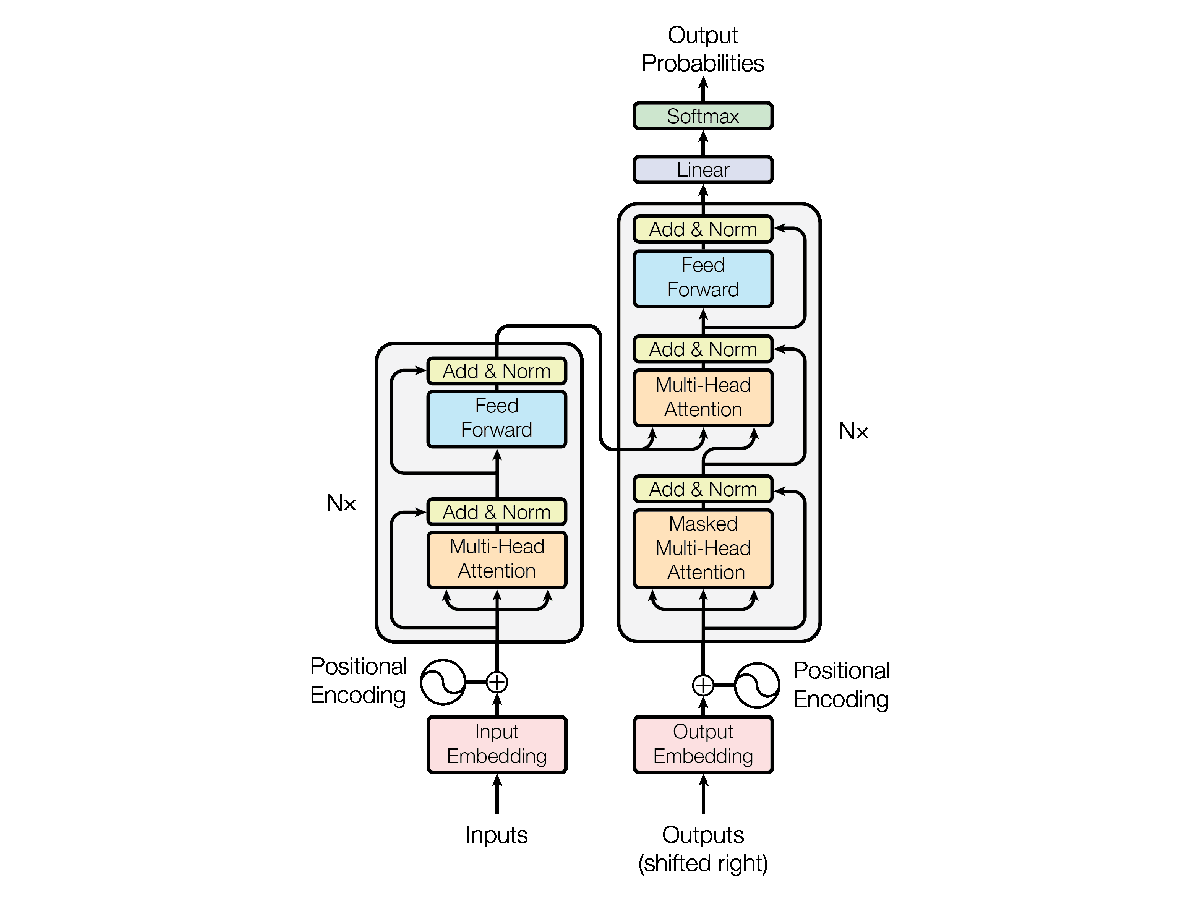

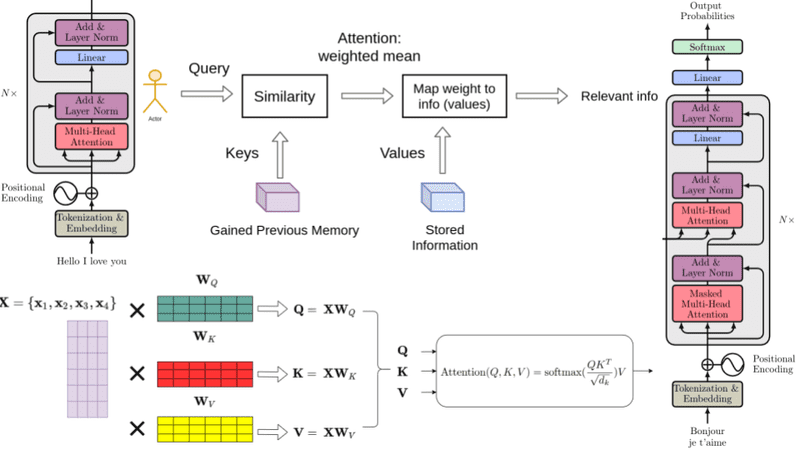

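A Transformer is a model architecture that eschews recurrence and instead relies entirely on an attention mechanism to draw global dependencies between input and output. Before Transformers, the dominant sequence transduction models were based on complex recurrent or convolutional neural networks that include an encoder and a decoder. The Transformer also uses an encoder and a decoder, but replacing recurrence with attention allows significantly more parallelization. An RNN must process positions one step at a time because each hidden state depends on the previous one, whereas attention computes the representation of every position from all positions at once. Attention also relates any two positions in a constant number of operations, whereas in convolutional models that number grows with the distance between the positions.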
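The attention step described above can be sketched as scaled dot-product attention, softmax(QKᵀ/√d_k)V, the form used in "Attention Is All You Need". What follows is a minimal single-head sketch in plain Python under stated assumptions: there are no learned projection matrices (Q, K and V are all the raw input, i.e. self-attention), no masking, and no multi-head splitting. The helper names (`matmul`, `scaled_dot_product_attention`, `rnn_states`) and the toy recurrence are hypothetical and exist only for illustration. The sketch contrasts attention, where each position's output is derived from all positions at once, with a recurrence whose loop has to run step by step.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python product of an (n x k) matrix and a (k x m) matrix."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(m: Matrix) -> Matrix:
    return [list(row) for row in zip(*m)]


def softmax(row: List[float]) -> List[float]:
    # Subtract the max before exponentiating for numerical stability.
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """softmax(Q K^T / sqrt(d_k)) V for a single head, without masking."""
    d_k = len(k[0])
    scores = matmul(q, transpose(k))
    scaled = [[s / math.sqrt(d_k) for s in row] for row in scores]
    # Each row of weights depends only on Q and K, never on another output row,
    # so every position could be computed in parallel.
    weights = [softmax(row) for row in scaled]
    return matmul(weights, v)


def rnn_states(xs: Matrix, w_h: float = 0.5) -> Matrix:
    """Toy element-wise recurrence (hypothetical): h_t = tanh(x_t + w_h * h_{t-1}).

    Each step needs the previous hidden state, so the loop is inherently sequential.
    """
    h = [0.0] * len(xs[0])
    states = []
    for x in xs:
        h = [math.tanh(xi + w_h * hi) for xi, hi in zip(x, h)]
        states.append(h)
    return states


if __name__ == "__main__":
    # Three made-up token embeddings of dimension 2.
    x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    # Self-attention with Q = K = V = x (no learned projections, an assumption).
    for row in scaled_dot_product_attention(x, x, x):
        print([round(val, 3) for val in row])
    # The recurrent version produces one state per position, strictly in order.
    for state in rnn_states(x):
        print([round(val, 3) for val in state])
```

In a real model, Q, K and V would come from learned linear projections and the products would run as batched tensor operations on an accelerator; the explicit loops here are only meant to make the data dependencies visible.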

What Is a Transformer Model?

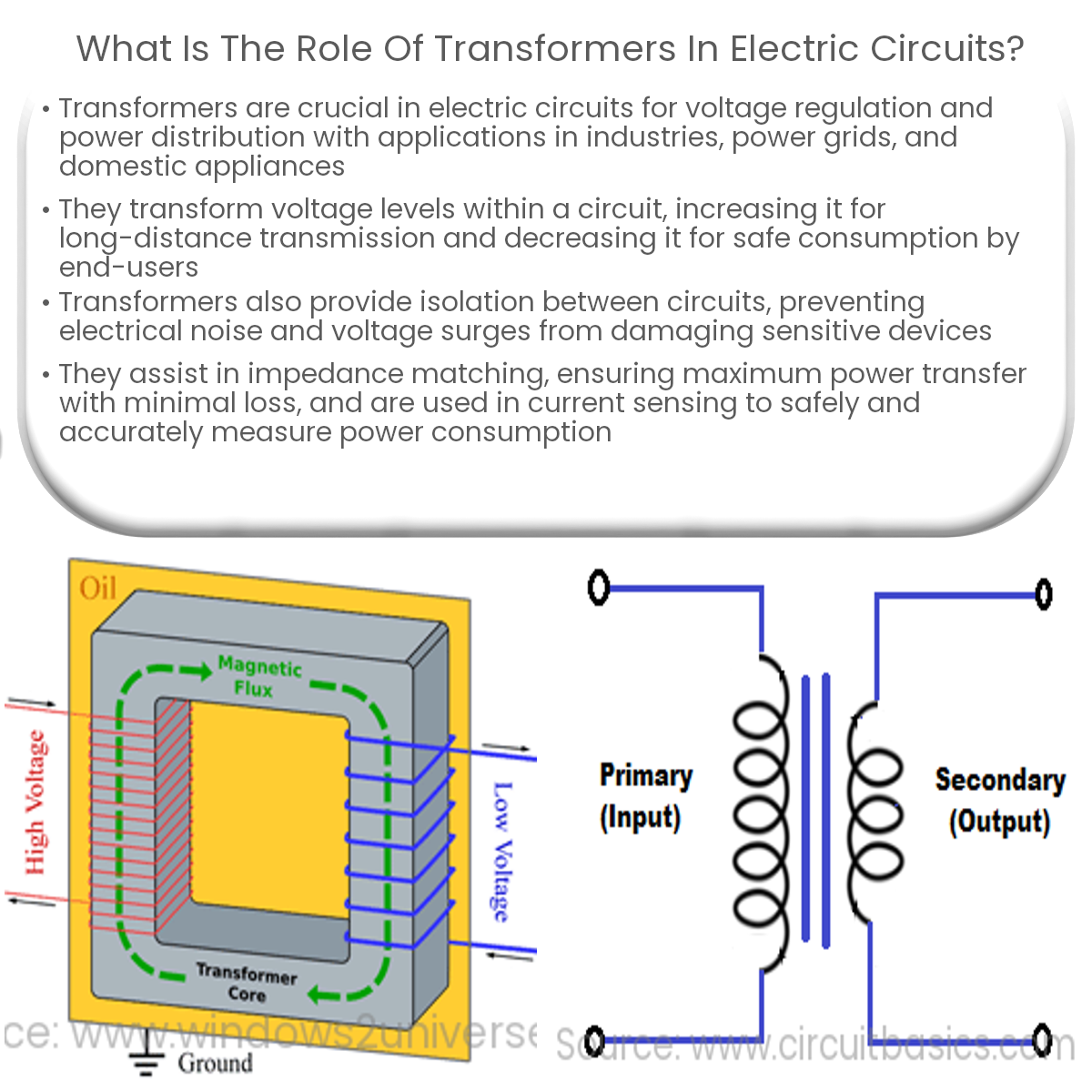

What is the role of transformers in electric circuits?

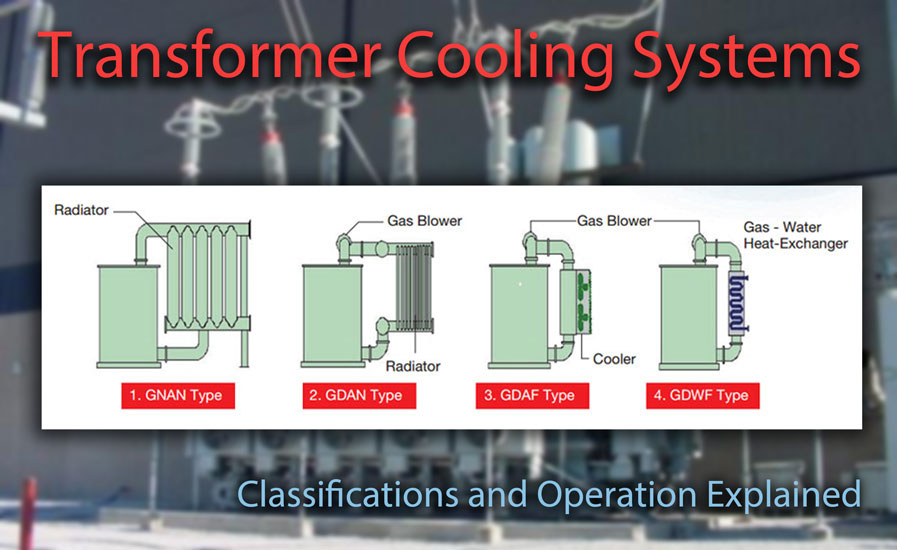

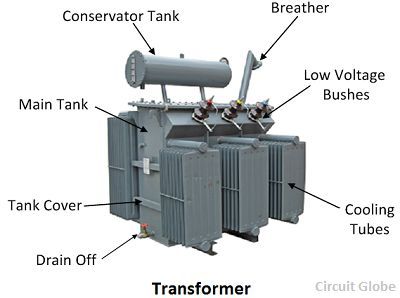

Transformer Cooling Systems and Methods Explained - Articles - TestGuy Electrical Testing Network

Understanding the Transformer Model: A Breakdown of “Attention is All You Need”, by Srikari Rallabandi, MLearning.ai

Transformers BART Model Explained for Text Summarization

Transformer Architecture explained, by Amanatullah

What is a Transformer? - definition and meaning - Circuit Globe

Explain the need for Positional Encoding in Transformer models (with Example)

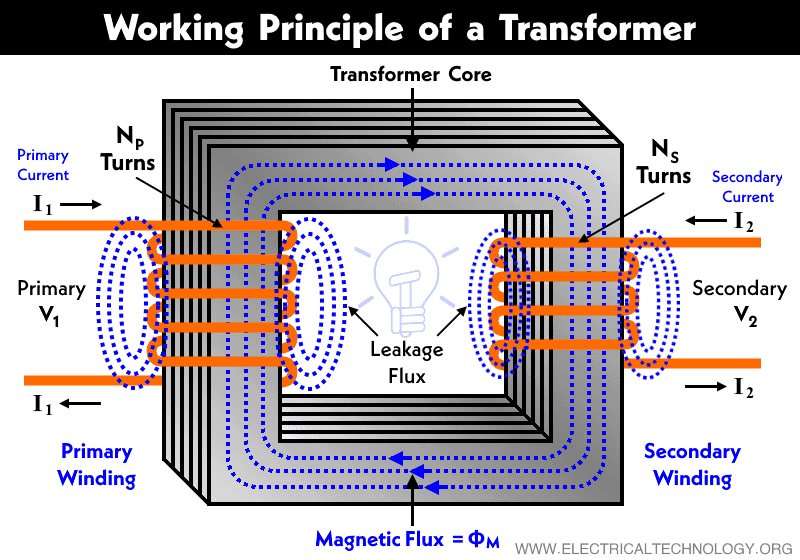

What is a Transformer? Transformers Explained - Working Principle ( Transformer Tutorial)

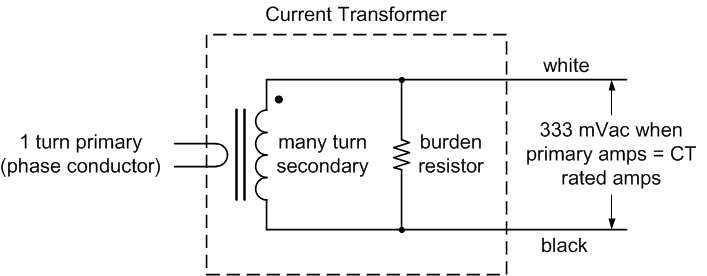

Current Transformers (CTs) Explained - Continental Control Systems, LLC

Transformer Architecture Explained, Attention Is All You Need

What is a Transformer And How Do They Work?, Transformer Working Principle